An operator-grade recipe for counter-UAS in low light: make infrared the primary, let RGB assist, and ship it on real edge boxes.

Tyler Gibbs

Author

If you've ever tried to track a palm-sized quad at night, you know the demo gods aren't kind. RGB looks great at noon; after sunset, thermal (IR) quietly carries the team. This post makes the case for an IR-first pipeline—with RGB as a helpful sidekick—not the other way around. It's based on brand-new benchmarks and detectors from 2025, plus hard lessons from deploying on sub-15 W edge boxes.

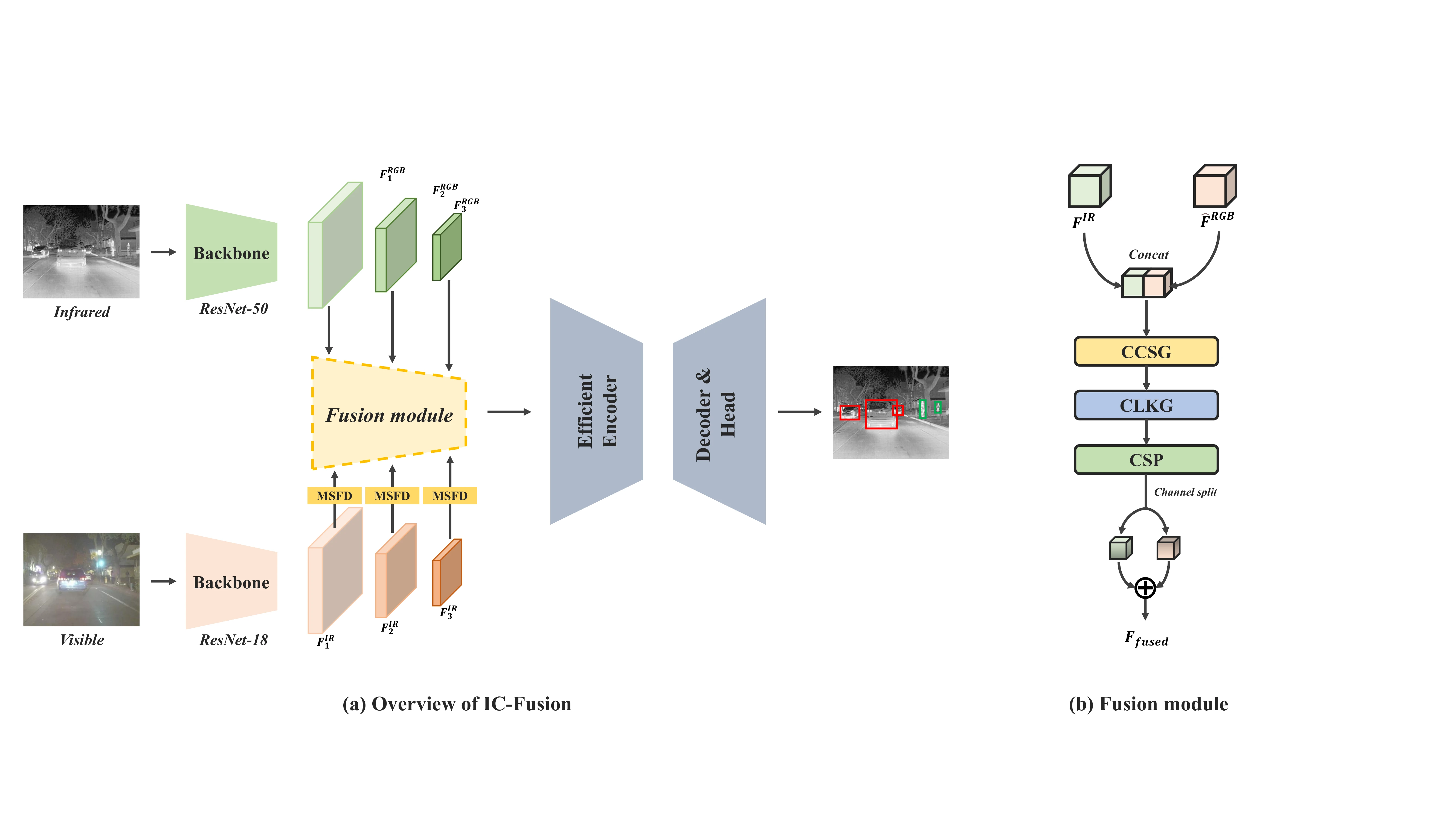

Most multispectral posts treat IR as a "booster" for RGB. But new work shows IR-centric fusion improves detection when the target is tiny, fast, and low-contrast—exactly the counter-UAS regime at dusk/night. A recent infrared-centric multispectral DETR (IC-Fusion) explicitly structures cross-modal fusion around the IR stream and reports strong results on night/low-light sets. That's the cue to architect for IR-first rather than RGB-first.

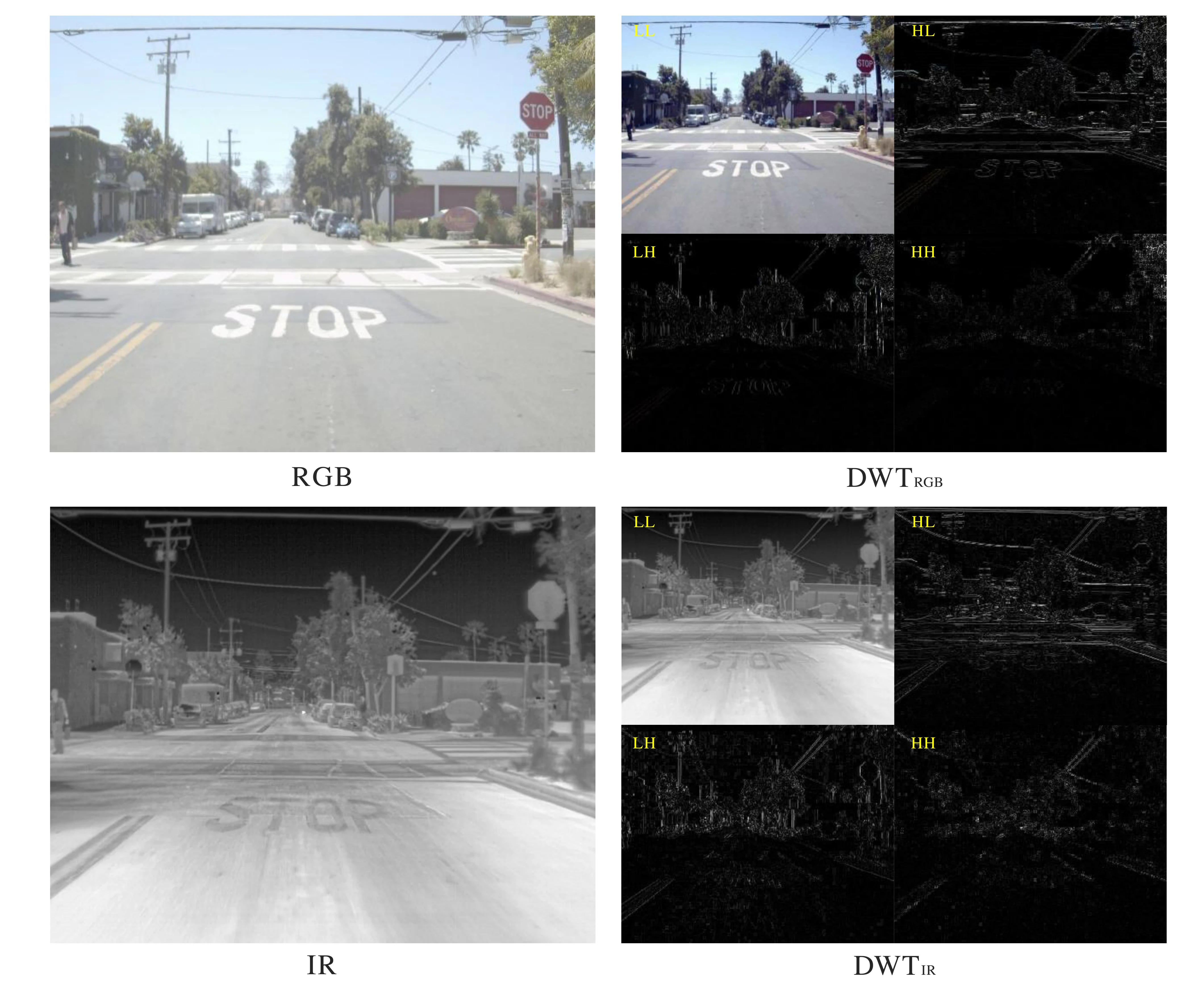

Wavelet decomposition reveals that IR images contain structurally rich high-frequency information (LH, HL bands) critical for object localization, while RGB primarily encodes low-frequency semantic structures.
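
To make the sub-band terminology concrete, here is a minimal single-level 2D Haar decomposition in plain Python. It is an illustrative sketch, not the analysis behind the figure: the function names `haar_dwt2` and `band_energy` are made up for this example, and the LH/HL labels follow one common convention (libraries differ on which is which).

```python
def haar_dwt2(img):
    """Single-level 2D Haar transform of an even-sized grayscale image.

    `img` is a list of equal-length rows. Returns (LL, LH, HL, HH),
    each half the input size in both dimensions.
    """
    rows, cols = len(img), len(img[0])
    if rows % 2 or cols % 2:
        raise ValueError("image height and width must be even")
    ll, lh, hl, hh = [], [], [], []
    for r in range(0, rows, 2):
        ll_row, lh_row, hl_row, hh_row = [], [], [], []
        for c in range(0, cols, 2):
            a, b = img[r][c], img[r][c + 1]          # top pair of the 2x2 block
            d, e = img[r + 1][c], img[r + 1][c + 1]  # bottom pair
            ll_row.append((a + b + d + e) / 2.0)  # average: low-frequency content
            lh_row.append((a + b - d - e) / 2.0)  # top minus bottom: horizontal edges
            hl_row.append((a - b + d - e) / 2.0)  # left minus right: vertical edges
            hh_row.append((a - b - d + e) / 2.0)  # diagonal detail
        ll.append(ll_row)
        lh.append(lh_row)
        hl.append(hl_row)
        hh.append(hh_row)
    return ll, lh, hl, hh


def band_energy(band):
    """Sum of squared coefficients in one sub-band."""
    return sum(v * v for row in band for v in row)
```

Comparing `band_energy` of the LH and HL bands between co-registered IR and RGB crops is one rough way to check, on your own data, whether the thermal stream carries more edge detail around small targets.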

Also: the community finally released CST Anti-UAV, a thermal SOT benchmark focused on tiny drones in complex scenes. State-of-the-art trackers that look heroic on older sets slump to ~36% state accuracy here—proof that "night + tiny" stays brutal unless you design for it.

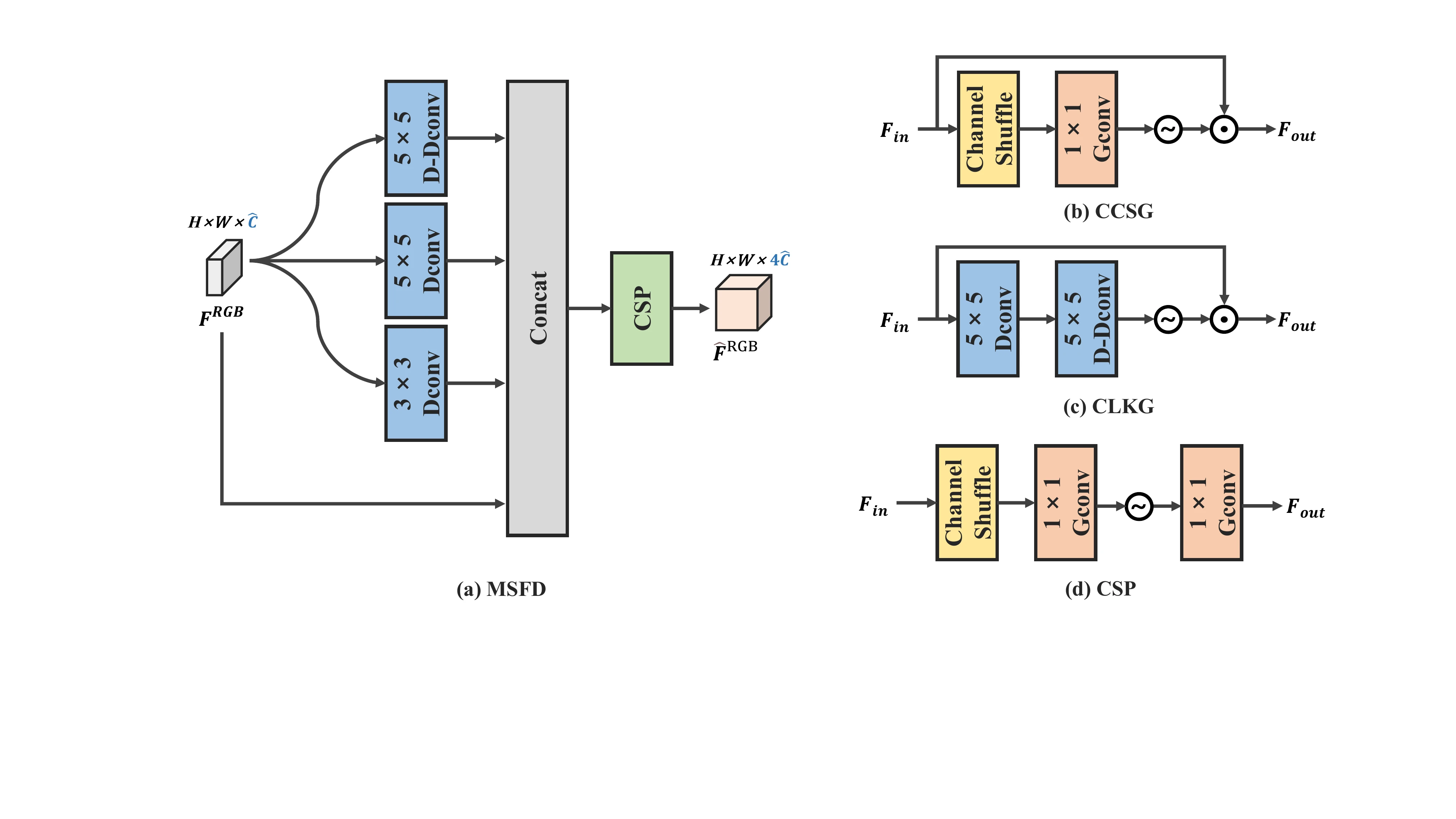

IC-Fusion components: Multi-Scale Feature Distillation (MSFD) enhances RGB features, while Cross-Modal Channel Shuffle Gate (CCSG), Cross-Modal Large Kernel Gate (CLKG), and Channel Shuffle Projection (CSP) enable effective cross-modal interaction.

Small hot specks in clutter love to trigger false positives. AA-YOLO's trick is simple and useful: put a statistical anomaly test into the YOLO head to tame backgrounds without heavy compute. Great leverage for low-power deployments.
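
As a rough illustration of what a statistical anomaly test can look like, the sketch below keeps only candidates whose score is a strong outlier against the background distribution, using the median and the median absolute deviation (MAD). This is not AA-YOLO's actual formulation: the function name `anomaly_gate`, the threshold `k`, and the choice of a MAD-based z-score are all assumptions made for this example.

```python
import statistics


def anomaly_gate(scores, k=5.0, eps=1e-6):
    """Return indices of scores that stand out from the background.

    scores: per-cell (or per-candidate) detector scores for one frame.
    k:      how many robust standard deviations above the median counts
            as an anomaly (hypothetical default, tune on your data).
    """
    med = statistics.median(scores)
    mad = statistics.median([abs(s - med) for s in scores])
    # 1.4826 * MAD estimates the standard deviation for Gaussian data;
    # eps guards against a perfectly flat background.
    sigma = max(1.4826 * mad, eps)
    return [i for i, s in enumerate(scores) if (s - med) / sigma > k]
```

The appeal for low-power nodes is that the test costs a couple of medians per frame, which is negligible next to the backbone.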

A single crisp bbox is not victory. On CST Anti-UAV, long, uninterrupted custody is the real score—state accuracy and continuity fall off fast for tiny targets. Favor trackers that stabilize ID under scale changes and clutter; report ID-F1/MOTA and false alarms per hour, not just mAP.
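
A minimal sketch of the operational metrics mentioned above, assuming you already have per-frame tracking flags and error counts from your own evaluation harness. The function names are illustrative; the MOTA formula is the standard CLEAR MOT definition, and ID-F1 is left out because it needs a full identity-matching step.

```python
def mota(false_negatives, false_positives, id_switches, num_gt):
    """CLEAR MOT accuracy: 1 - (FN + FP + IDSW) / total ground-truth objects."""
    return 1.0 - (false_negatives + false_positives + id_switches) / num_gt


def false_alarms_per_hour(num_false_alarms, duration_s):
    """Normalize a false-alarm count to an hourly rate."""
    return num_false_alarms * 3600.0 / duration_s


def longest_custody_s(tracked_flags, fps):
    """Longest unbroken run of tracked frames, in seconds.

    tracked_flags: one truthy/falsy value per frame (target held or not).
    """
    longest = run = 0
    for tracked in tracked_flags:
        run = run + 1 if tracked else 0
        longest = max(longest, run)
    return longest / fps
```

Reporting `longest_custody_s` next to mAP makes it obvious when a detector scores well per frame but the track keeps breaking.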

Your node will be power/thermal-limited and offline sometimes. Budget for INT8/FP16, single-batch latency, and thermal headroom on Jetson-class modules (Orin Nano/NX/AGX). Expect 10–40 W envelopes in the field and plan your FPS accordingly.
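
A back-of-the-envelope way to plan FPS is to sum single-batch stage latencies and apply a slowdown factor for thermal throttling. The sketch below assumes the stages run sequentially (no pipelining); the stage names, latencies, and the `throttle_slowdown` factor are placeholders, not measurements from any Jetson module.

```python
def sustained_fps(stage_latency_ms, throttle_slowdown=1.0):
    """Estimate end-to-end FPS for sequential single-batch stages.

    stage_latency_ms:  dict of stage name -> measured latency in ms.
    throttle_slowdown: multiplier on latency when clocks drop under
                       thermal load (1.0 means no throttling).
    """
    total_ms = sum(stage_latency_ms.values()) * throttle_slowdown
    return 1000.0 / total_ms


# Hypothetical numbers for illustration only.
budget = {"detect": 20.0, "fuse": 10.0, "track": 10.0}
cool_fps = sustained_fps(budget)                         # no throttling
hot_fps = sustained_fps(budget, throttle_slowdown=1.25)  # 25% slower clocks
```

Size the pipeline against the throttled figure, since that is what the node will sustain on a hot night in an enclosure.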

The IC-Fusion framework prioritizes infrared features with a deep IR backbone while using a lightweight RGB backbone. The cross-modal fusion module efficiently integrates complementary information for robust multispectral detection.
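
The "IR as primary, RGB as assist" idea can be illustrated with a generic gated residual: IR features pass through untouched and RGB features are added through a gate. This is not the paper's CCSG, CLKG, or CSP modules. The function name, the sigmoid gate, and the fixed `gate_weight` and `gate_bias` values are assumptions for this sketch; in a real network the gate parameters would be learned.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def ir_centric_fuse(ir_feat, rgb_feat, gate_weight=4.0, gate_bias=-2.0):
    """Fuse per-channel features with IR as the base signal.

    ir_feat, rgb_feat: equal-length lists of channel activations.
    The gate opens as the RGB activation grows, so a dark, near-zero
    RGB stream contributes almost nothing and the output stays IR-driven.
    """
    if len(ir_feat) != len(rgb_feat):
        raise ValueError("feature vectors must have the same length")
    fused = []
    for ir, rgb in zip(ir_feat, rgb_feat):
        gate = sigmoid(gate_weight * abs(rgb) + gate_bias)
        fused.append(ir + gate * rgb)  # IR passes through; RGB is a gated residual
    return fused
```

The design point is the asymmetry: dropping the RGB stream entirely degrades the output gracefully to the IR features rather than breaking the detector.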

- Sensors → LWIR (primary) + RGB (secondary)
- Detect → Thermal-tuned detector (CRT-YOLO), optional AA-YOLO head for background control
- Fuse → IR-centric transformer head (IC-Fusion) with cross-modal gates
- Track → Lightweight SOT/MOT with scale-aware updates; export track continuity + ID confidence (not just boxes)
- Decide → Thresholds on false-alarm/hr and continuity ≥ N s to trigger alerts/handoffs
- Deploy → INT8 engine on Orin; watchdog for thermal throttling; offline-first logging for post-mission evals
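
The Decide stage above could be sketched as a small gating function over track state. Everything here is an assumption for illustration: the `TrackState` fields, the default thresholds, and the policy of doubling the required custody time when the recent false-alarm rate exceeds its budget.

```python
from dataclasses import dataclass


@dataclass
class TrackState:
    track_id: int
    continuous_s: float   # seconds of unbroken custody so far
    id_confidence: float  # tracker's confidence that the ID is stable, in [0, 1]


def should_alert(track, recent_fa_per_hr, min_continuity_s=2.0,
                 min_id_conf=0.6, fa_budget_per_hr=1.0):
    """Decide whether a track is solid enough to trigger an alert or handoff."""
    required_s = min_continuity_s
    if recent_fa_per_hr > fa_budget_per_hr:
        # Assumed policy: when false alarms run over budget, demand
        # twice the custody time before alerting.
        required_s *= 2.0
    return track.continuous_s >= required_s and track.id_confidence >= min_id_conf
```

For example, `should_alert(TrackState(1, 3.0, 0.8), recent_fa_per_hr=0.5)` passes, while the same track is held back if the recent false-alarm rate climbs above the budget.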

Qualitative comparison on FLIR dataset: RGB-only misses low-contrast targets, IR-only provides robust detection, and IC-Fusion combines the best of both modalities for superior performance in challenging conditions.

If your mission is "find it, keep it, and don't cry wolf at night," build for thermal-first detection, fuse RGB into IR (not the other way around), and size the whole thing for bounded-latency edge hardware. That's how you get a counter-UAS stack that actually holds up after sunset.

For more on deploying AI systems to edge environments with strict resource constraints, read our post on Edge AI Deployment.

This post draws heavily on recent research in infrared-centric multispectral object detection:

IC-Fusion: Multispectral Detection Transformer with Infrared-Centric Feature Fusion

The IC-Fusion framework demonstrates that prioritizing infrared features with a deep IR backbone and lightweight RGB backbone, combined with novel cross-modal fusion modules (MSFD, CCSG, CLKG, CSP), achieves superior performance on FLIR and LLVIP benchmarks while maintaining computational efficiency for edge deployment.
